Most BI teams don't realize they're not ready to scale until issues show up. A new client, a white-label request, or a large deal can quickly push a setup built for internal use beyond its limits. What once worked fine for a small team starts to break under pressure.

External analytics is a different challenge. Expectations are higher, and problems are visible to clients. A slow dashboard doesn't just frustrate users - it damages trust. This guide helps you find gaps early, before scale exposes them.

Why This Matters Now

Analytics is moving outside the firewall faster than most teams expected. According to TDWI's 2026 predictions, the real limit now is data readiness - not tools or models. The question is simple: can your delivery setup keep up with growing demand?

The shift is also economic. Capacity-based pricing is replacing per-user models, lowering the cost of scaling. As B EYE's 2026 research shows, analytics is no longer just dashboards - it lives in spreadsheets, apps, and workflows.

- Analytics demand is growing faster than delivery systems

- Data readiness limits value more than tools today

- Capacity pricing lowers cost but needs a scalable architecture

- Analytics now extends beyond dashboards into daily workflows

- Bottlenecks come from access, governance, and system design

The Core Audit Dimensions

A useful scale-readiness audit covers five interdependent areas. You can't optimise one in isolation - they interact, and weaknesses in one dimension tend to surface as symptoms in another.

1. Access Architecture

Start with a simple question your setup should answer clearly: how does the right user see only the right data, every time? If you can't explain that in one clean flow, there's a gap somewhere. And under scale, small gaps turn into real risks.

Role-based access control is expected, but it's not enough on its own. The real test is where your row-level security (RLS) lives - inside the model or spread across reports. When it sits at the report level, it becomes hard to manage, easy to break, and risky over time.

Audit questions to ask:

- Is RLS defined once in the semantic model, or replicated across individual reports?

- Can a new client tenant be provisioned without engineering involvement?

- Do external users authenticate via SSO, or are they managing separate credentials?

- Is there an audit log of data access that meets your compliance obligations?
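To make "defined once" concrete, here is a minimal sketch in plain Python rather than any BI platform's own syntax. The names (`User`, `ROWS`, `visible_rows`) and the sample data are hypothetical. The point it illustrates is that every report goes through one shared filter instead of carrying its own copy of the rule.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class User:
    name: str
    tenant_id: str


# Hypothetical sample rows; in practice these would come from the semantic model.
ROWS = [
    {"tenant_id": "acme", "revenue": 120},
    {"tenant_id": "acme", "revenue": 80},
    {"tenant_id": "globex", "revenue": 300},
]


def visible_rows(user: User, rows: list[dict]) -> list[dict]:
    """The single place where the row-level rule is defined."""
    return [r for r in rows if r["tenant_id"] == user.tenant_id]


def revenue_report(user: User) -> int:
    # Reports never filter by tenant themselves; they call visible_rows().
    return sum(r["revenue"] for r in visible_rows(user, ROWS))


def row_count_report(user: User) -> int:
    return len(visible_rows(user, ROWS))
```

Because both reports inherit the same filter, changing the access rule means changing one function, not hunting through every report.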

2. Multi-Tenancy Design

Multi-tenancy is where external analytics either stays stable or starts to break. The key question is simple: Is tenant separation built into your system, or handled by process? When it depends on people doing things right every time, it doesn't scale well.

This is where platforms like Reporting Hub come in. They are built for external delivery, with tenant control, branding, and access handled as core features. The difference becomes clear as you grow, especially once managing many clients by hand starts slowing everything down.

Audit questions to ask:

- How long does it take to onboard a new external client in the reporting environment?

- Is tenant separation enforced by the platform or by your team's discipline?

- Could a tenant's report accidentally expose another tenant's data? When did you last test this?

- Does your current design support 10x your current client count without a rebuild?
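The cross-tenant exposure question can be turned into an automated check. The sketch below assumes you can export, per tenant, the rows that tenant's reports actually return, and that each row carries a `tenant_id` field. Both are assumptions, and `find_leaks` is a hypothetical helper, not part of any platform.

```python
def find_leaks(rows_by_tenant: dict[str, list[dict]]) -> list[tuple[str, str]]:
    """Return (viewer, owner) pairs where a viewer's results include another tenant's row."""
    leaks = []
    for viewer, rows in rows_by_tenant.items():
        for row in rows:
            if row["tenant_id"] != viewer:
                leaks.append((viewer, row["tenant_id"]))
    return leaks


# Hypothetical exports: what each tenant's reports returned.
clean = {
    "acme": [{"tenant_id": "acme", "revenue": 120}],
    "globex": [{"tenant_id": "globex", "revenue": 300}],
}
leaky = {
    "acme": [
        {"tenant_id": "acme", "revenue": 120},
        {"tenant_id": "globex", "revenue": 300},  # should never appear here
    ],
    "globex": [{"tenant_id": "globex", "revenue": 300}],
}

print(find_leaks(clean))
print(find_leaks(leaky))
```

Running a check like this on a schedule, rather than once at launch, gives you a dated answer to "when did you last test this?"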

3. Semantic Model Health

The semantic model is the backbone of your analytics setup. It centralizes your business logic, keeping data consistent across users and use cases. When it's done right, it supports clean external delivery, works well with AI tools, and keeps governance simple.

When it's not, things get messy fast. Different reports start showing different answers to the same question, and trust begins to drop. With AI now querying these models directly, any gaps or inconsistencies can scale quickly and create even bigger problems.

Audit questions to ask:

- Are your key business metrics - revenue, retention, churn - defined once in a certified semantic model?

- Is there version control and change documentation on the semantic layer?

- Can external clients trust that the number they see in their portal matches what your team sees internally?

- Is the model documented enough that a new team member - or an AI agent - could correctly interpret it?
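As an illustration of "defined once", the sketch below keeps a metric's definition, version, and description in a single registry that both the internal dashboard and the client portal call. The registry layout and the function names are hypothetical, and the numbers are invented.

```python
# Hypothetical metric registry: one definition, one version, one description.
METRICS = {
    "churn_rate": {
        "version": 2,
        "description": "Customers lost in the period divided by customers at period start.",
        "compute": lambda lost, start: lost / start,
    },
}


def metric(name: str, **inputs: float) -> float:
    """Look up a metric by name and evaluate its single shared definition."""
    return METRICS[name]["compute"](**inputs)


def internal_dashboard_churn() -> float:
    return metric("churn_rate", lost=5, start=200)


def client_portal_churn() -> float:
    return metric("churn_rate", lost=5, start=200)
```

Since neither caller re-implements the formula, the two numbers cannot drift apart, and the description field gives a new team member or an AI agent something to interpret.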

4. Licensing and Cost Architecture

Here's something many BI teams miss: per-user licences for external reporting often cost more over time. What looks cheaper at first ends up costing extra in access management, compliance, and licence tracking. As you grow, this model starts to slow you down and eat into margins.

The market is shifting away from this approach. Usage-based and capacity pricing are becoming the standard for external analytics. Platforms like Reporting Hub follow this model, so costs stay predictable and don't rise with every new user.

Audit questions to ask:

- What does your current licensing cost per external viewer? What does it cost at 10x scale?

- Is there a cliff in your pricing model where adding a client cohort triggers a tier change?

- How much engineering and admin time is spent managing user licences and access requests each month?

- Does your pricing model create an incentive to restrict analytics access that should be expanded?
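The first two questions are simple arithmetic, and it helps to write them down. The sketch below compares a per-viewer model with a tiered capacity model at current scale and at 10x. Every price and tier boundary is invented for illustration and does not reflect any vendor's actual pricing.

```python
def per_user_cost(viewers: int, price_per_viewer: float) -> float:
    """Monthly cost when every external viewer needs a licence."""
    return viewers * price_per_viewer


def capacity_cost(viewers: int, tiers: list[tuple[int, float]]) -> float:
    """Monthly cost under tiers of (max_viewers, price), sorted by max_viewers."""
    for max_viewers, price in tiers:
        if viewers <= max_viewers:
            return price
    raise ValueError("viewer count exceeds the largest tier")


# Hypothetical figures, chosen only to show the shape of the comparison.
PRICE_PER_VIEWER = 10.0
TIERS = [(500, 3000.0), (5000, 9000.0)]

for viewers in (200, 2000):  # today vs. 10x
    print(viewers, per_user_cost(viewers, PRICE_PER_VIEWER), capacity_cost(viewers, TIERS))
```

With these made-up numbers, per-user pricing is cheaper at 200 viewers and more than twice as expensive at 2,000. The tier boundary at 500 viewers is the kind of cliff the second question asks about: the 501st viewer triples the monthly bill.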

5. Governance and Consistency at Client Level

This last part is often ignored because it's uncomfortable: are your clients getting consistent, governed reports, or just whatever was built that week? When delivery isn't controlled, quality starts to vary without anyone noticing.

And that inconsistency shows up quickly. Clients may see different numbers, branding can drift, and refresh schedules become uneven. As highlighted in Atlan's 2025 Forrester Wave Leader positioning, modern governance is about setting clear rules to keep delivery fast, consistent, and reliable at scale.

Audit questions to ask:

- Is report delivery to external clients automated, or does it require manual steps each cycle?

- Are there documented SLAs for data freshness and report availability that clients can hold you to?

- How is your brand applied consistently across client portals - and does it survive report updates?

- If a metric definition changes, how do you update it across all clients simultaneously?
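A data-freshness SLA is only meaningful if something checks it. The sketch below assumes you can obtain the last successful refresh time per tenant. The `stale_tenants` helper, the 24-hour threshold, and the timestamps are all hypothetical.

```python
from datetime import datetime, timedelta, timezone


def stale_tenants(
    last_refresh: dict[str, datetime], sla: timedelta, now: datetime
) -> list[str]:
    """Return tenants whose last successful refresh is older than the SLA."""
    return sorted(t for t, ts in last_refresh.items() if now - ts > sla)


# Fixed "now" so the example is reproducible.
now = datetime(2026, 1, 15, 12, 0, tzinfo=timezone.utc)
last_refresh = {
    "acme": now - timedelta(hours=2),
    "globex": now - timedelta(hours=30),
}

print(stale_tenants(last_refresh, timedelta(hours=24), now))  # ['globex']
```

A scheduled job that alerts on a non-empty result turns the SLA from a document into a control.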

Scoring Your Readiness

Run through the five dimensions above and give each an honest red/amber/green. Red means a known structural weakness that would break under load. Amber means it works now, but with effort you wouldn't want to sustain. Green means it's designed to scale without heroics.

One or two ambers are normal; they tell you where to invest next. Three or more reds across the five dimensions usually mean the architecture needs rethinking before you can scale, not just optimising. A red in access architecture or multi-tenancy warrants urgent treatment: those are the dimensions where failure is visible to clients and carries the highest reputational cost.
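One possible way to record the result is sketched below. It assumes a single rating per dimension and reads the thresholds as follows: three or more reds overall means the architecture needs rethinking, and any red in access architecture or multi-tenancy is urgent. That reading, the dimension keys, and the `summarise` helper are assumptions for illustration.

```python
# Dimensions where failure is directly visible to clients.
CLIENT_VISIBLE = {"access_architecture", "multi_tenancy"}


def summarise(scores: dict[str, str]) -> dict:
    """Summarise red/amber/green ratings, one rating per dimension."""
    reds = [d for d, s in scores.items() if s == "red"]
    ambers = [d for d, s in scores.items() if s == "amber"]
    return {
        "reds": reds,
        "ambers": ambers,
        "rethink_architecture": len(reds) >= 3,
        "urgent": sorted(d for d in reds if d in CLIENT_VISIBLE),
    }


# Hypothetical audit result.
scores = {
    "access_architecture": "amber",
    "multi_tenancy": "red",
    "semantic_model": "green",
    "licensing_cost": "amber",
    "governance": "green",
}

print(summarise(scores))
```

Keeping each quarter's scores in this form makes it easy to see whether a dimension is improving or slipping between check-ins.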

Try to push back on the instinct to treat this as a one-time exercise. Scale-readiness isn't a state you achieve and then maintain passively. Client growth, new data sources, product changes, and AI integrations all shift your delivery requirements continuously. The teams that handle scale well tend to treat this audit as a quarterly check-in, not an annual box-tick.

Where Reporting Hub Fits

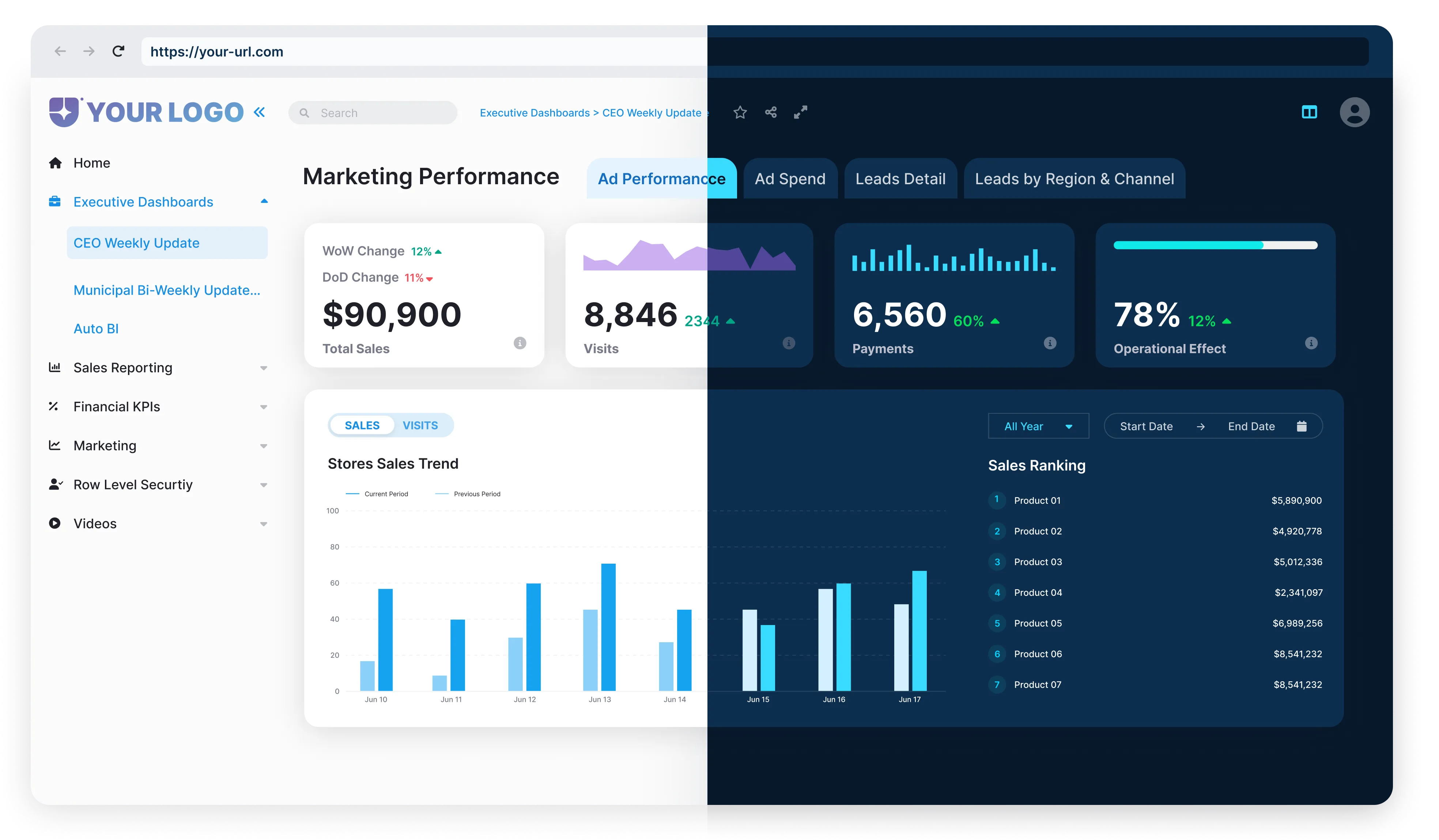

Reporting Hub was designed for exactly this problem space. It's a white-label analytics delivery platform built on top of Power BI. It's purpose-built for organisations that need to distribute analytics to external clients, partners, or customers at scale, without rebuilding infrastructure from scratch or managing the chaos of per-user licensing.

The platform handles branded portal delivery, multi-tenant access management, automated report distribution, and client onboarding as native capabilities - not bolt-ons. For teams scoring amber or red on multi-tenancy design, access architecture, or governance consistency, it directly addresses the structural gaps that prevent scale.

And with BI Genius - Reporting Hub's AI agent layer - those governed semantic models can now power conversational analytics for external clients too. It offers the transparency and auditability that client-facing AI requires.

Start the Audit Before Scale Starts It for You

The organisations that scale external analytics delivery without drama are rarely the ones with the most sophisticated tooling. They're the ones that caught their architectural weaknesses early, before a major client onboarding revealed them in the worst possible way.

Run the five-dimension audit. Score honestly. Then tackle the reds in order of client-facing impact. The earlier you do this work, the cheaper it is to fix, and the faster you can say yes to the growth opportunities that are coming.

Book a demo, and we'll walk through your current setup.

Sources Referenced