Ask your team a simple question: if 200 customers open a report today, will they all see the same result? Not just the same data, but the same explanation and the same AI-generated summary. In other words, will they all receive the same version of the truth?

For many organizations that deliver analytics externally, the honest answer is no. That inconsistency may be easy to overlook at first, but over time it becomes more than a technical issue. It becomes a trust and compliance problem, and eventually a business risk.

- Inconsistent AI explanations create trust and compliance risks.

- The delivery layer, not the data, drives inconsistency issues.

- Internal BI governance does not extend beyond the organization.

- AI outputs require approval, version control, and traceability.

- Regulators demand audit trails for external AI delivery.

- Governed infrastructure ensures one version of the truth.

The Hidden Cost of Inconsistency

Most organizations that run Power BI internally have already solved the internal consistency problem. Semantic models are standardized. Metrics are defined clearly. Finance, operations, and marketing work from the same definitions of revenue, churn, and performance.

However, once analytics are delivered externally, that internal governance often stops at the boundary. Different customers may receive slightly different versions of reports. AI-generated summaries can vary between sessions.

Enterprise analytics teams often say, "Different customers are getting different AI explanations for the same data." That issue rarely appears in dashboards or monitoring systems until someone raises a complaint.

Where the Problem Actually Lives

This is not usually a data quality problem. The underlying data and models are often accurate and well managed.

The consistency gap exists at the delivery layer. That layer controls:

- What customers see

- How AI explains it

- Which version of the report is active

- Whether any of it can be traced later

Why the Internal-External Divide Creates Risk

Internal BI systems were designed for internal users. Power BI workspaces assume employees with managed credentials. Governance controls such as Row Level Security and sensitivity labels are built around that assumption.

External delivery was not part of that original design. As a result, organizations often improvise when pushing analytics outward. Some teams export reports and email them as PDFs. Others expose shared dashboards behind a single login. Some rebuild reports in secondary systems. None of these approaches guarantees consistency at scale.

How AI Makes It Worse

The issue becomes more serious when an AI-generated narrative is layered on top of analytics. AI systems generate summaries, explain variances, and highlight trends dynamically. Without approval workflows or version control, those outputs can vary from session to session.

This creates several immediate risks:

- AI summaries are not guaranteed to say the same thing each time

- There may be no record of what the AI communicated on a specific date

- Legal and compliance teams have limited visibility into external AI output

- Customer disputes cannot be traced back to a definitive explanation

When AI is customer-facing, inconsistency is no longer harmless variation. It becomes exposure.

The Regulatory Pressure Is Increasing

Most of the EU AI Act's obligations for high-risk AI systems apply from August 2026. The most serious violations can result in fines of up to €35 million or 7% of worldwide annual turnover, whichever is higher. The regulation explicitly requires auditability, traceability, and version control for AI systems in regulated contexts.

U.S. regulators are applying similar scrutiny. Banking regulators, such as the OCC, and healthcare regulators, such as the FDA, are increasing oversight of AI-driven systems.

What This Means in Practice

Organizations delivering AI-generated analytics externally must be able to demonstrate:

- What was delivered

- To which customer

- On what date

- Under which version

- With what AI reasoning

Stating that content was reviewed before release is not enough. Evidence must be retrievable. According to IBM's 2025 Cost of a Data Breach Report, roughly one in five organizations studied reported a breach involving shadow AI, and high levels of shadow AI added an average of $670,000 to breach costs.

Most analytics delivery systems were not built to meet these standards. Creating audit-ready logging, approval workflows, and per-customer version tracking internally often becomes a 12–18 month, $200K+ engineering effort.

What Governed Intelligence Delivery Requires

Solving the consistency problem requires more than policy statements or minor configuration changes. It requires infrastructure built specifically for external delivery.

Version-Controlled Analytics

Every customer should receive the same approved version of a report and its associated AI narrative. Updates must propagate centrally without creating drift between tenants. Consistency must be systematic, not manual.
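As a minimal Python sketch of central propagation, assume a single registry that records the active version of each report and that every tenant resolves through. `VersionRegistry`, `ReportVersion`, and the report and tenant identifiers are hypothetical names used for illustration only; they are not part of Power BI or any specific product.

```python
from dataclasses import dataclass
from typing import Dict, Tuple


@dataclass(frozen=True)
class ReportVersion:
    report_id: str
    version: int
    narrative: str  # the approved AI-generated summary text


class VersionRegistry:
    """Hypothetical single source of truth for which report version is live."""

    def __init__(self) -> None:
        self._versions: Dict[Tuple[str, int], ReportVersion] = {}
        self._active: Dict[str, int] = {}

    def publish(self, rv: ReportVersion) -> None:
        # Store the version and make it active for every tenant at once.
        self._versions[(rv.report_id, rv.version)] = rv
        self._active[rv.report_id] = rv.version

    def resolve(self, report_id: str, tenant_id: str) -> ReportVersion:
        # tenant_id is part of the signature so a caller could log the
        # delivery, but it deliberately has no effect on the version
        # returned: there are no per-tenant overrides that could drift.
        return self._versions[(report_id, self._active[report_id])]


registry = VersionRegistry()
registry.publish(ReportVersion("revenue-q1", 1, "Revenue rose on renewals."))
registry.publish(ReportVersion("revenue-q1", 2, "Revenue rose 4% on renewals."))

# Every tenant resolves to the same, most recently published version.
served = {t: registry.resolve("revenue-q1", t) for t in ("tenant-a", "tenant-b")}
```

The design choice worth noting is that the active version is stored once per report rather than once per tenant, so an update cannot reach some customers and miss others.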

Controlled AI Publication

AI-generated narrative should not be published externally without review. Structured approval workflows ensure that summaries and explanations are validated before customers see them. Human oversight must be part of the delivery process.
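One way to sketch such an approval gate in Python is a small state machine in which a narrative starts as a draft and can only be published once a named reviewer has approved it. The `Narrative`, `Status`, `review`, and `publish` names are assumptions made for this example, not an existing API.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Status(Enum):
    DRAFT = "draft"
    APPROVED = "approved"
    REJECTED = "rejected"


@dataclass
class Narrative:
    text: str
    status: Status = Status.DRAFT
    reviewer: Optional[str] = None


def review(narrative: Narrative, reviewer: str, approve: bool) -> None:
    # Only drafts can be reviewed; the reviewer's identity is recorded.
    if narrative.status is not Status.DRAFT:
        raise ValueError("narrative has already been reviewed")
    narrative.status = Status.APPROVED if approve else Status.REJECTED
    narrative.reviewer = reviewer


def publish(narrative: Narrative) -> str:
    # Gate: nothing is released externally unless it was approved.
    if narrative.status is not Status.APPROVED:
        raise PermissionError("narrative is not approved for external release")
    return narrative.text


summary = Narrative("Churn fell 2% quarter over quarter.")
try:
    publish(summary)          # still a draft, so this is refused
    blocked = False
except PermissionError:
    blocked = True

review(summary, reviewer="compliance@example.com", approve=True)
released = publish(summary)   # now allowed
```

A real workflow would likely add more states (for example, changes requested) and persist the review decision, but the gate in `publish` is the essential part.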

Complete Audit Trails

The system must log:

- What each customer received

- Which version was active

- What the AI explanation included

- The exact date and time of delivery

These records must be retrievable for compliance and dispute resolution.
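The logged fields listed above could be captured in a record like the following Python sketch. It is illustrative only: `DeliveryRecord` and `AuditLog` are hypothetical names, and the in-memory list stands in for the durable, tamper-evident storage a production system would need.

```python
import hashlib
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import List, Optional


@dataclass(frozen=True)
class DeliveryRecord:
    customer_id: str
    report_id: str
    version: int
    narrative: str          # what the AI explanation included
    narrative_sha256: str   # fingerprint for later integrity checks
    delivered_at: datetime  # exact date and time of delivery (UTC)


class AuditLog:
    def __init__(self) -> None:
        self._records: List[DeliveryRecord] = []

    def record(self, customer_id: str, report_id: str, version: int,
               narrative: str,
               delivered_at: Optional[datetime] = None) -> DeliveryRecord:
        entry = DeliveryRecord(
            customer_id, report_id, version, narrative,
            hashlib.sha256(narrative.encode("utf-8")).hexdigest(),
            delivered_at or datetime.now(timezone.utc),
        )
        self._records.append(entry)  # records are only ever appended
        return entry

    def delivered_to(self, customer_id: str, start: datetime,
                     end: datetime) -> List[DeliveryRecord]:
        # Answers: what did this customer receive in [start, end)?
        return [r for r in self._records
                if r.customer_id == customer_id
                and start <= r.delivered_at < end]


log = AuditLog()
log.record("customer-a", "revenue-q1", 2, "Revenue rose 4% on renewals.",
           datetime(2025, 3, 3, 9, 0, tzinfo=timezone.utc))
log.record("customer-b", "revenue-q1", 2, "Revenue rose 4% on renewals.",
           datetime(2025, 3, 3, 9, 5, tzinfo=timezone.utc))

march = log.delivered_to("customer-a",
                         datetime(2025, 3, 1, tzinfo=timezone.utc),
                         datetime(2025, 4, 1, tzinfo=timezone.utc))
```

Storing the narrative text together with its hash means a dispute can be answered with the exact wording delivered, and the hash gives a quick way to confirm that two customers received identical text.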

Tenant-Level Isolation

Customer A must never see Customer B's data, even when both rely on the same semantic model. Per-tenant controls must enforce strict separation while maintaining consistency.
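A minimal sketch of per-tenant separation over a shared model, in Python, is a mandatory filter applied before any row leaves the delivery layer. The `Row` type, `SHARED_MODEL` data, and `rows_for` function are invented for this example; the idea is loosely analogous to row-level filtering but does not represent Power BI's Row Level Security API.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Row:
    tenant_id: str
    metric: str
    value: float


# One shared semantic model holding rows for several customers (sample data).
SHARED_MODEL = [
    Row("customer-a", "revenue", 120.0),
    Row("customer-b", "revenue", 95.0),
    Row("customer-a", "churn", 0.03),
]


def rows_for(tenant_id: str, rows: List[Row]) -> List[Row]:
    # Fail closed: a missing tenant must never fall back to "all rows".
    if not tenant_id:
        raise ValueError("tenant_id is required")
    return [r for r in rows if r.tenant_id == tenant_id]


customer_a_view = rows_for("customer-a", SHARED_MODEL)
```

Both customers read from the same model and the same metric definitions, which preserves consistency, while the filter keeps each customer's rows separate.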

Internal governance focuses on data accuracy. External intelligence delivery requires governance at the experience layer.

How Reporting Hub Addresses the Gap

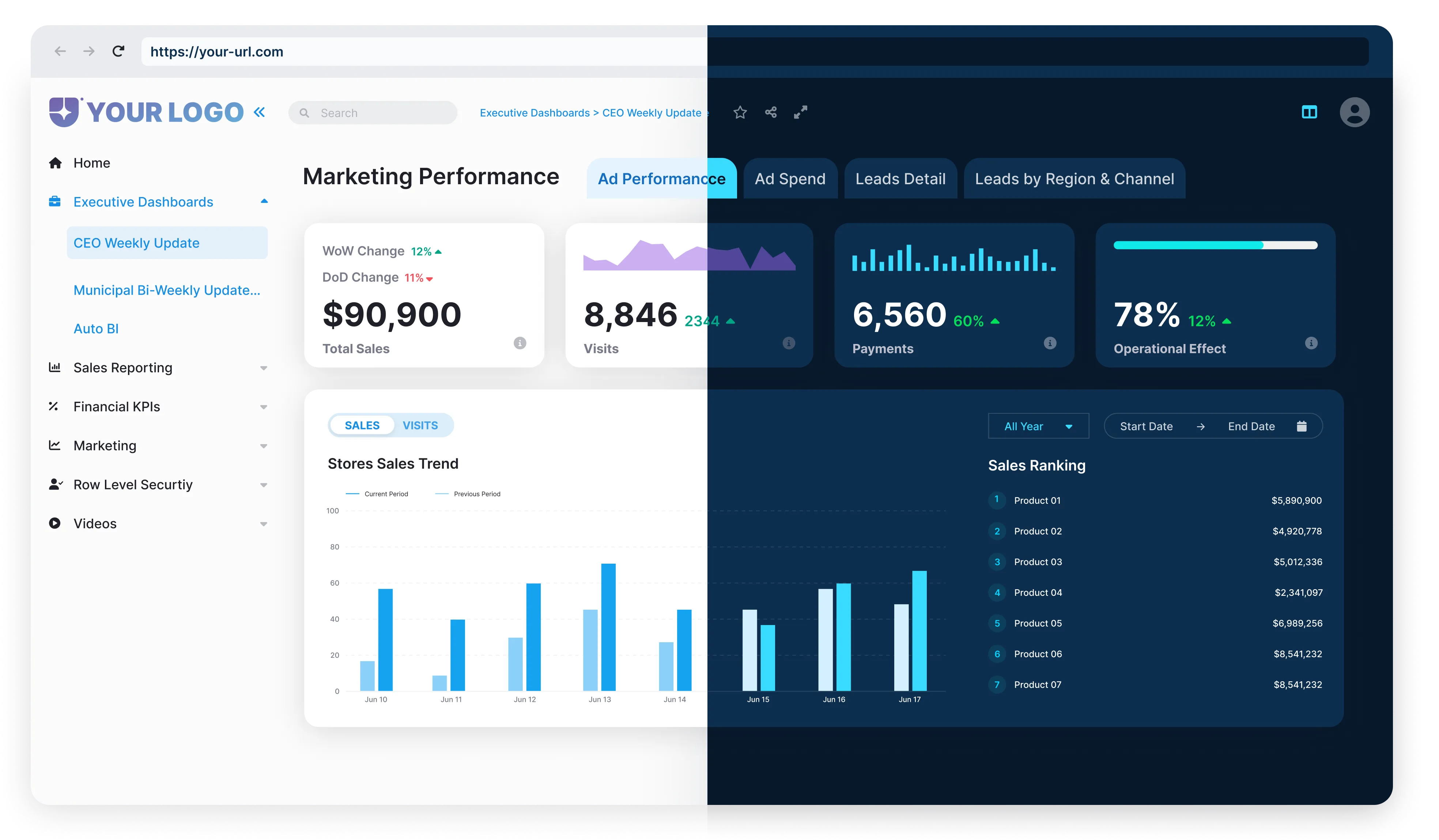

Reporting Hub is designed to bridge the gap between internal analytics governance and external delivery requirements. Built on top of Power BI, it introduces structured orchestration and control without replacing the existing analytics foundation.

At a small scale, teams may attempt to manage consistency manually. But at 100 or 200 customers, manual consistency becomes impossible. Only a systematic governance infrastructure can maintain control.

The Business Impact of Inconsistency

The cost of inconsistency is often invisible until it becomes expensive. It may appear as:

- A customer receiving different explanations for the same metric across reporting periods

- A regulator requesting evidence of what was communicated during a specific timeframe

- A compliance team blocking AI deployment due to a lack of audit visibility

The decision is not whether governed intelligence delivery is needed. It is whether to build that infrastructure internally or deploy a platform designed for this purpose.

Internal builds often take 12 to 18 months and cost $150K to $300K up front, followed by ongoing maintenance expenses. In contrast, deploying a purpose-built platform enables organizations to operationalize governed delivery within weeks.

Insight generation is increasingly commoditized. AI generation is widely available. Governed, consistent intelligence delivery is becoming the true differentiator.

Final Words

Consistency in external analytics is a trust requirement. When customers receive different explanations for the same data, confidence declines quickly. Governed intelligence delivery ensures that every report, every AI summary, and every interaction is controlled, traceable, and aligned.

At scale, consistency is not optional — it is essential.

1. IBM, Cost of a Data Breach Report 2025. ibm.com

2. Artificial Intelligence Act, Article 99: Penalties. artificialintelligenceact.eu